MuMTAffect: A Multimodal Multitask Affective Framework for Personality and Emotion Recognition from Physiological Signals

Oct 27, 2025

Meisam Jamshidi Seikavandi

Fabricio Batista Narcizo

Ted Vucurevich

Andrew Burke Dittberner

Paolo Burelli

Abstract

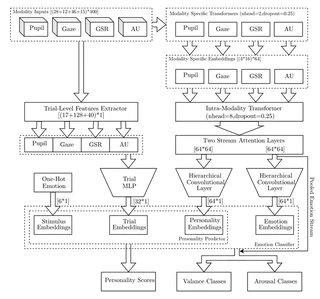

We present MuMTAffect, a novel Multimodal Multitask Affective Embedding Network designed for joint emotion classification and personality prediction (re-identification) from short physiological signal segments. MuMTAffect integrates multiple physiological modalities (pupil dilation, eye gaze, facial action units, and galvanic skin response) using dedicated transformer-based encoders for each modality and a fusion transformer to model cross-modal interactions. Inspired by the Theory of Constructed Emotion, the architecture explicitly separates core-affect encoding (valence–arousal) from higher-level conceptualization, thereby grounding predictions in contemporary affective neuroscience. Personality-trait prediction is leveraged as an auxiliary task to generate robust, user-specific affective embeddings, significantly enhancing emotion recognition performance. We evaluate MuMTAffect on the AFFEC dataset, demonstrating that stimulus-level emotional cues (Stim Emo) and galvanic skin response substantially improve arousal classification, while pupil and gaze data enhance valence discrimination. The inherent modularity of MuMTAffect allows effortless integration of additional modalities, ensuring scalability and adaptability. Extensive experiments and ablation studies underscore the efficacy of our multimodal multitask approach in creating personalized, context-aware affective computing systems, highlighting pathways for further advancements in cross-subject generalization.
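The multitask idea in the abstract, where personality prediction acts as an auxiliary task alongside emotion classification, can be illustrated with a combined training objective. The sketch below is not the authors' implementation: it assumes valence and arousal are each treated as a classification target, that personality is represented as a vector of trait scores fitted with mean squared error, and that the two terms are combined with a hypothetical weight `aux_weight`. All function and parameter names are illustrative.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target):
    """Negative log-likelihood of the target class index."""
    return -math.log(softmax(logits)[target])


def mse(pred, target):
    """Mean squared error between two equal-length score vectors."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def multitask_loss(valence_logits, valence_label,
                   arousal_logits, arousal_label,
                   trait_pred, trait_target,
                   aux_weight=0.5):
    """Emotion loss plus a weighted auxiliary personality loss.

    Assumptions (not taken from the paper): classification for valence
    and arousal, regression for trait scores, and a fixed aux_weight.
    """
    emotion = (cross_entropy(valence_logits, valence_label)
               + cross_entropy(arousal_logits, arousal_label))
    personality = mse(trait_pred, trait_target)
    return emotion + aux_weight * personality


# Example with made-up values for a single signal segment.
loss = multitask_loss(
    valence_logits=[1.2, -0.3], valence_label=0,
    arousal_logits=[0.1, 0.4], arousal_label=1,
    trait_pred=[0.5, 0.6, 0.4, 0.7, 0.3],
    trait_target=[0.6, 0.6, 0.5, 0.7, 0.2],
)
print(f"combined loss: {loss:.4f}")
```

Setting `aux_weight` to zero recovers an emotion-only objective, which is one simple way to set up the kind of ablation the abstract mentions.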

Publication

In 3rd International Workshop on Multimodal and Responsible Affective Computing

Multimodal Emotion Recognition

Personality Prediction

Physiological Signals

Transformers

Multitask Learning

Cognitive Modeling

Affective Computing

Theory of Constructed Emotion

Authors

Fabricio Batista Narcizo

(he/him)

Senior AI Research Scientist

Fabricio Batista Narcizo is a Senior AI Research Scientist in the Video Technology department at GN Hearing A/S (Jabra) and a Part-Time Lecturer and Course Manager at the IT University of Copenhagen (ITU). He received his Ph.D. degree in Computer Science from the ITU in 2017, his M.Sc. degree in Electronic & Computer Engineering from the Aeronautics Institute of Technology (ITA) in 2008, and his B.Sc. degree in Computer Science from the University of Western Santa Catarina (UNOESC) in 2005. His research interests lie in computer vision, image analysis, artificial intelligence, data science, data mining, machine learning, edge AI, and human-computer interaction, with a particular interest in eye-tracking.