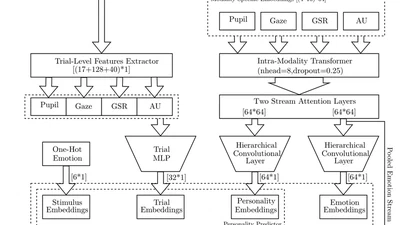

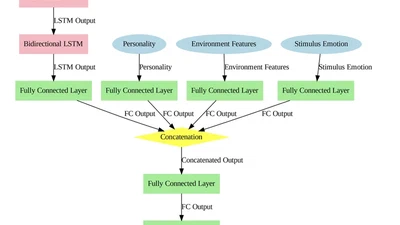

MuMTAffect: A Multimodal Multitask Affective Framework for Personality and Emotion Recognition from Physiological Signals

We present MuMTAffect, a novel Multimodal Multitask Affective Embedding Network designed for joint emotion classification and personality prediction (re-identification) from short …

meisam-jamshidi-seikavandi